Ars Technica

Not to be left out of the rush to integrate generative AI into search, on Wednesday DuckDuckGo announced DuckAssist, an AI-powered factual summary service built on technology from Anthropic and OpenAI. It is available for free today as a wide beta test for users of DuckDuckGo’s browser extensions and browsing apps. Because DuckAssist is powered by an AI model, the company admits that it might make stuff up but hopes that will happen rarely.

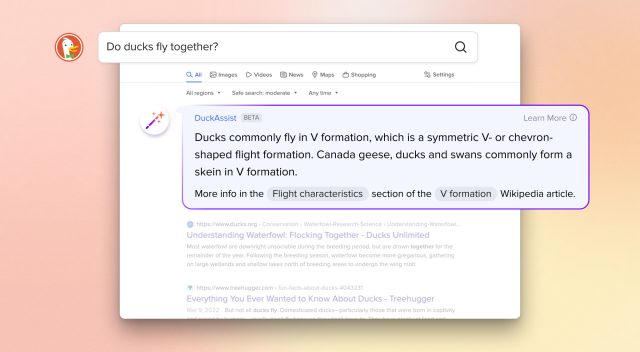

Here’s how it works: If a DuckDuckGo user searches a question that can be answered by Wikipedia, DuckAssist may appear and use AI natural language technology to generate a brief summary of what it finds in Wikipedia, with source links listed below. The summary appears above DuckDuckGo’s regular search results in a special box.

The company positions DuckAssist as a new form of “Instant Answer,” a feature that spares users from having to dig through web search results to find quick information on topics like news, maps, and weather. Instead, the search engine presents Instant Answer results above the usual list of websites.


DuckDuckGo does not say which large language model (LLM) or models power DuckAssist, although some form of OpenAI API seems likely. Ars Technica has reached out to DuckDuckGo representatives for clarification. But DuckDuckGo CEO Gabriel Weinberg explains how the feature sources its answers in a company blog post:

DuckAssist answers questions by scanning a specific set of sources—for now that’s usually Wikipedia, and occasionally related sites like Britannica—using DuckDuckGo’s active indexing. Because we’re using natural language technology from OpenAI and Anthropic to summarize what we find in Wikipedia, these answers should be more directly responsive to your actual question than traditional search results or other Instant Answers.

Since DuckDuckGo’s main selling point is privacy, the company says that DuckAssist is “anonymous” and emphasizes that it does not share search or browsing history with anyone. “We also keep your search and browsing history anonymous to our search content partners,” Weinberg writes, “in this case, OpenAI and Anthropic, used for summarizing the Wikipedia sentences we identify.”

If DuckDuckGo is using OpenAI’s GPT-3 or ChatGPT API, one might worry that the site sends each user’s query to OpenAI every time DuckAssist is invoked. But reading between the lines, it appears that only the Wikipedia article (or an excerpt of one) gets sent to OpenAI for summarization, not the user’s search itself. We have reached out to DuckDuckGo for clarification on this point as well.

DuckDuckGo calls DuckAssist “the first in a series of generative AI-assisted features we hope to roll out in the coming months.” If the launch goes well—and nobody breaks it with adversarial prompts—DuckDuckGo plans to roll out the feature to all search users “in the coming weeks.”

DuckDuckGo: Risk of hallucinations “greatly diminished”

As we’ve previously covered on Ars, LLMs have a tendency to produce convincing but erroneous results, which AI researchers call “hallucinations,” a term of art in the field. Hallucinations can be hard to spot unless you know the material being referenced, and they arise partially because GPT-style LLMs from OpenAI do not distinguish between fact and fiction in their datasets. Additionally, the models can make false inferences from data that is otherwise accurate.

On this point, DuckDuckGo hopes to avoid hallucinations by leaning heavily on Wikipedia as a source: “by asking DuckAssist to only summarize information from Wikipedia and related sources,” Weinberg writes, “the probability that it will ‘hallucinate’—that is, just make something up—is greatly diminished.”

While relying on a quality source of information may reduce errors that stem from false information in the AI’s dataset, it may not reduce false inferences. DuckDuckGo also puts the burden of fact-checking on the user, providing a source link below the AI-generated result that can be used to check its accuracy. But the system won’t be perfect, and Weinberg admits as much: “Nonetheless, DuckAssist won’t generate accurate answers all of the time. We fully expect it to make mistakes.”

As more firms deploy LLM technology that can easily misinform, it may take some time and widespread use before companies and customers decide what level of hallucination is tolerable in an AI-powered product that is designed to factually inform people.